We know lots of animals talk to one another, but how close are their howls and calls to human language? Or are those communications more like music?

Researchers at UC Merced dug into those questions in their latest paper, published this fall. They created a new method of sound comparison that they say lays important groundwork for future language study across species.

Cognitive science professors Christopher Kello and Ramesh Balasubramaniam, along with graduate student Butovens Médé, scoured more than 200 animal, human and music recordings in search of patterns.

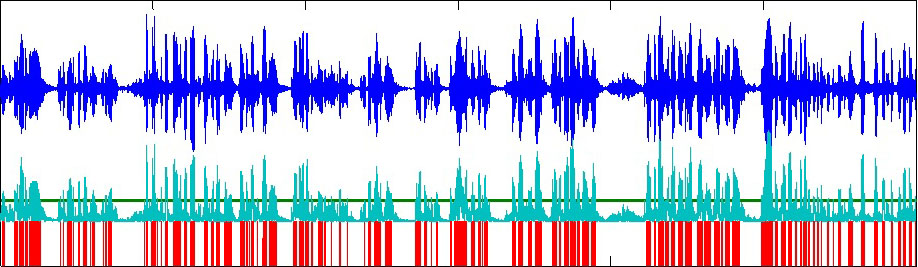

They were looking for what they call temporal hierarchy: the layering of the timing of sound. They start with a waveform and mark every peak in its amplitude envelope, or volume peak, as a straight line. Viewed all together, the lines form a sort of barcode.

The researchers start with a waveform (blue) and mark every peak in its amplitude envelope, or volume peak, as a straight line (green). Viewed all together, the lines form a barcode (red).

More volume peaks often indicate that the speaker is conveying a lot of emotion, or relaying extra information. Many barcode lines together create a cluster of sound energy, which happens so quickly that the human ear doesn’t catch it.
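For readers who want to see the idea in concrete terms, here is a rough Python sketch of turning a waveform into a barcode of volume peaks. It is not the researchers' code, and the article does not spell out their algorithm. The smoothing window, the loudness threshold, the minimum gap between peaks and the helper names (`synth_bursts`, `amplitude_envelope`, `barcode`) are all assumptions made for illustration, and the sketch does not attempt to measure how the peaks cluster across timescales.

```python
import math


def synth_bursts(centers, sample_rate, duration=1.0, tone_hz=440.0, width=0.02):
    """Hypothetical test signal: a tone that swells briefly around each center time."""
    samples = []
    for i in range(int(duration * sample_rate)):
        t = i / sample_rate
        loudness = sum(math.exp(-((t - c) ** 2) / (2 * width ** 2)) for c in centers)
        samples.append(loudness * math.sin(2 * math.pi * tone_hz * t))
    return samples


def amplitude_envelope(samples, window):
    """Approximate the volume contour: rectify, then take a centered moving average."""
    prefix = [0.0]
    for value in samples:
        prefix.append(prefix[-1] + abs(value))
    half = window // 2
    n = len(samples)
    envelope = []
    for i in range(n):
        lo = max(0, i - half)
        hi = min(n, i + half + 1)
        envelope.append((prefix[hi] - prefix[lo]) / (hi - lo))
    return envelope


def barcode(samples, sample_rate, window=200, threshold=0.2, min_gap=400):
    """Return the times (in seconds) of volume peaks, one 'bar' per peak.

    window, threshold and min_gap are assumed values, not taken from the study.
    """
    env = amplitude_envelope(samples, window)
    # Candidate bars: local maxima of the envelope that rise above the threshold.
    candidates = [
        i for i in range(1, len(env) - 1)
        if env[i] > threshold and env[i - 1] < env[i] >= env[i + 1]
    ]
    # Keep the tallest candidates first and drop any that sit too close to a kept one.
    kept = []
    for i in sorted(candidates, key=lambda idx: env[idx], reverse=True):
        if all(abs(i - j) >= min_gap for j in kept):
            kept.append(i)
    return [i / sample_rate for i in sorted(kept)]


if __name__ == "__main__":
    rate = 8000
    signal = synth_bursts([0.2, 0.5, 0.8], rate)
    print(barcode(signal, rate))
```

Run on the synthetic signal, the sketch should report one bar near each of the three swells in volume. A real recording would need its own tuning of the window and threshold.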

“Before this method of converting sound into barcode, we weren’t able to adequately compare music and speech, whale song and bird song,” Kello said. “It’s a new way to analyze sound.”

Take orca whales and humpback whales, for example. To the human ear, their songs might sound similar. But when converted into barcodes, the patterns are entirely different.

The orca’s sound clusters have a back-and-forth structure, much like human conversation.

Humpback whales, on the other hand, have a much more steady and predictable barcode. Kello says it has to do with the fact that they’re solo singers, and their songs have certain patterns. Humpback barcodes actually line up most closely with recordings of hermit thrush — also solo singers.

And when the researchers brought in music as a point of comparison, the work got even more complicated. They found that jazz music, due to its collaborative nature, looked a lot like human conversation.

Kello said this is new information in an age-old debate about the origin of music.

“Perhaps these two types of behaviors, our musical behaviors and our language communication behaviors, perhaps they co-evolved,” Kello said. “Maybe they are tapping into the same type of abilities we have in our brains. And so this type of research maybe gives us a new piece of evidence, and new way to approach that very fundamental question about how language and music have evolved.”

There are nuances within human language, as well.

Comparing human speech to a Google Voice reading of the same words revealed a much more complex pattern in the human recording. And prior studies on this topic have shown that communication between parents and infants has a unique cluster pattern, but one that remains consistent across multiple languages.

Going forward, the researchers hope to use the barcode to automatically classify different types of sound and speech.
